Today, many important decisions involving information are no longer made by humans alone. Nick Bostrom, a leading philosopher of AI, warns that the Technological Singularity is no longer a distant utopian idea but may be just a year or two away. So what is the Technological Singularity? Join FPT.AI in this article to explore humanity’s journey toward super-intelligent AI.

What is the Technological Singularity?

The Technological Singularity is a hypothetical point at which technology develops so quickly that it outpaces human understanding. The term borrows from physics: at the singularity of a black hole, gravity becomes so strong that the laws of physics as we know them break down. Applied to technology, the singularity is the moment when machines become so advanced that they surpass human capabilities, creating a future we cannot fully predict or control.

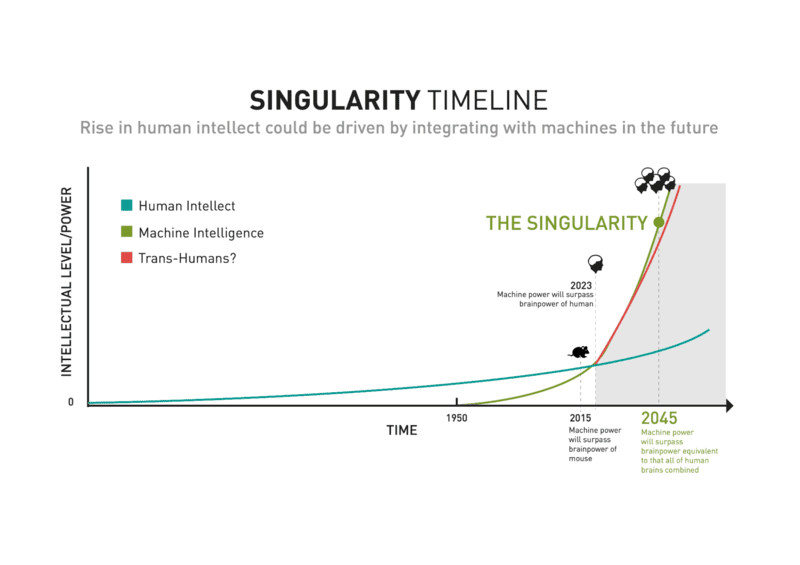

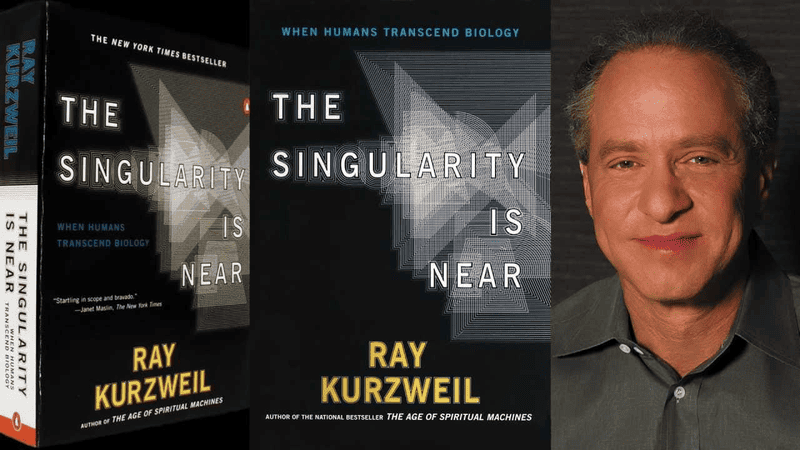

Ray Kurzweil, a famous futurist, predicts that in the 2030s humans will begin merging their brains with computers. He argues that this would amplify computing power and intelligence exponentially, creating super-brains that can upgrade themselves beyond anything humans have ever seen.

The core of the singularity argument is that no law of physics prevents AI from eventually becoming more powerful than humans in every field. Such an AI could not only upgrade itself but also create entirely new forms of intelligence.

Although it remains largely a science-fiction idea, the Technological Singularity raises concerns about a shift in power: will humans stay in control, or will machines shape our future? Thinking about the Technological Singularity can help humanity steer AI development in a direction that benefits civilization.

The Evolution of the Concept of Technological Singularity

The concept of the Technological Singularity dates back to the mid-20th century. John von Neumann was one of the first to speak of a singularity, speculating about a time when technological progress would become so rapid and complex that humans could no longer fully predict or understand it.

Alan Turing, widely considered the father of modern computer science, laid an important foundation for today’s discussions of the singularity. In his 1950 paper “Computing Machinery and Intelligence,” Turing proposed that a computer could be considered intelligent if it could converse with humans without being identified as a machine. This idea inspired decades of research in artificial intelligence (AI), the field at the center of singularity debates.

Around the same time, Stanislaw Ulam’s work on cellular automata and iterative systems provided important insights into self-replicating systems. This research fed into later discussions about whether machines could replicate themselves and eventually surpass human intelligence.

Interest in the singularity continued to grow in the following decades. Vernor Vinge, a mathematics professor, computer scientist, and science fiction author, made a crucial prediction in his 1993 essay “The Coming Technological Singularity”: unless something prevented it, the emergence of superhuman intelligence would mark a singularity in human history, radically changing the way the world works.

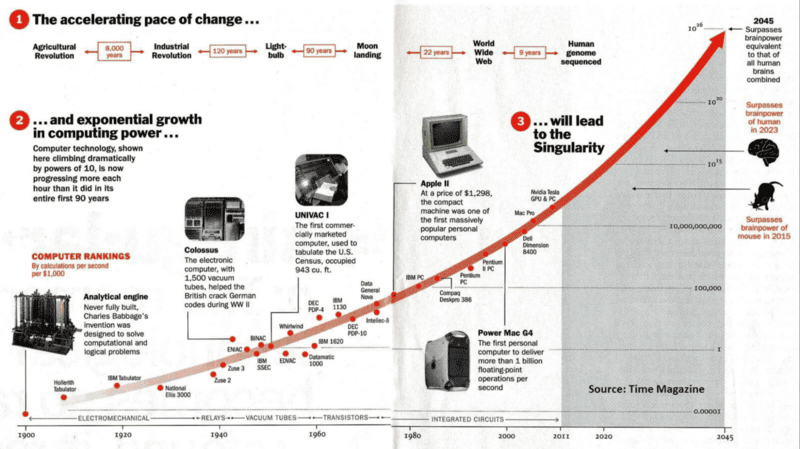

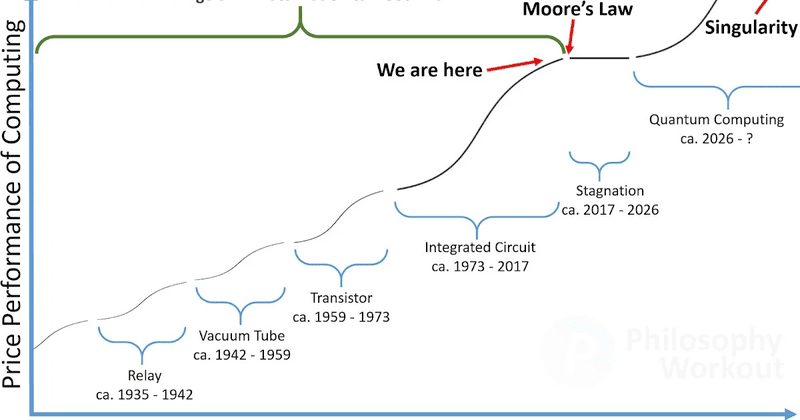

This idea was later popularized by Ray Kurzweil, who used Moore’s law to illustrate how AI could quickly surpass human intelligence. Moore’s law is the observation that the number of transistors on a microchip doubles roughly every two years, which has historically delivered more computing power at a steadily lower cost per computation. This suggests that technological progress could accelerate at an ever-increasing rate.
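To make the “doubling every two years” idea concrete, here is a minimal Python sketch of an idealized Moore’s-law curve. The starting count of one million transistors and the fixed two-year doubling period are assumptions chosen for illustration; real chips have never tracked the curve exactly.

```python
def projected_transistors(initial: float, years: float, doubling_period: float = 2.0) -> float:
    """Idealized Moore's-law projection: the count doubles every `doubling_period` years."""
    return initial * 2 ** (years / doubling_period)


# Hypothetical starting chip with one million transistors.
for years in (2, 10, 20):
    count = projected_transistors(1_000_000, years)
    print(f"after {years:>2} years: {count:,.0f} transistors")
```

Twenty years at this pace means ten doublings, roughly a thousandfold increase (2^10 = 1,024), which is why proponents of the singularity describe such growth as explosive.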

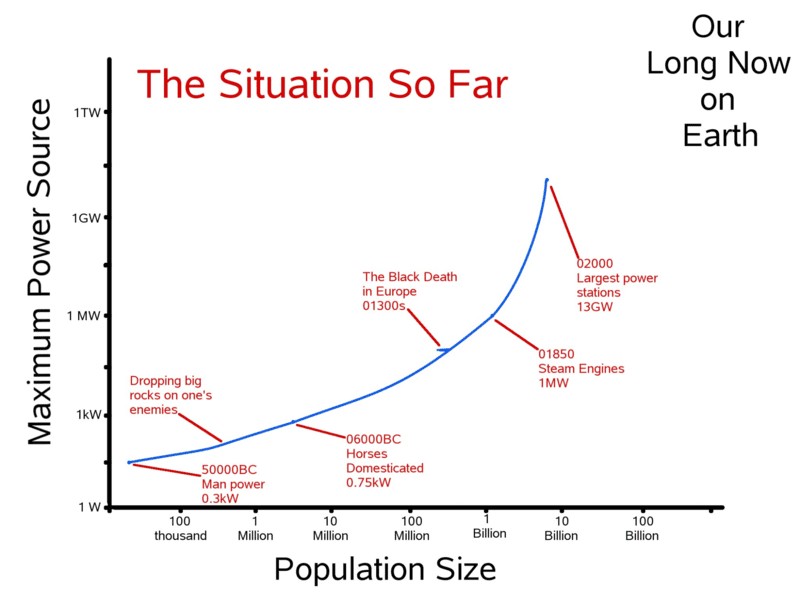

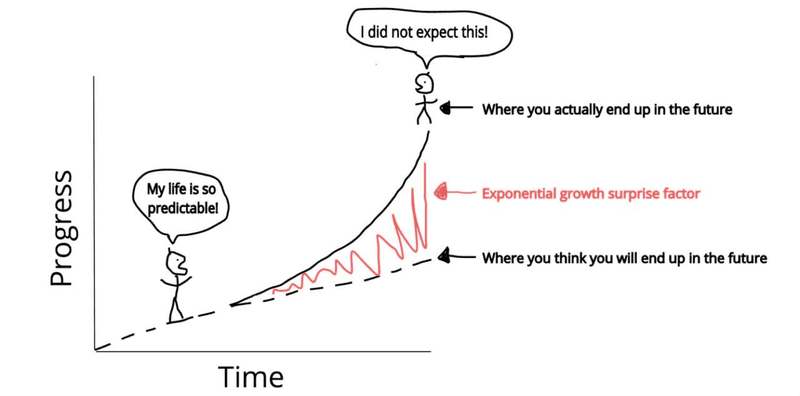

Discussing the pace of technological progress, Eamonn Healy, a professor at St. Edward’s University, presented his idea of telescopic evolution in the film Waking Life (2001). Telescopic evolution is the idea that the rate of evolution is accelerating: changes that once took millennia can now unfold in a few centuries or even less, thanks to advances in science and technology. Healy’s view of accelerating progress is consistent with singularity theories, which hold that society could undergo rapid, disruptive change driven by AI and other technologies. It also echoes futurists such as Ray Kurzweil, who believe these changes could arrive around the middle of the 21st century.

Are we close to the Technological Singularity?

The timing of the Technological Singularity remains a matter of debate. Ray Kurzweil acknowledges that algorithmic breakthroughs and the explosion of big data have helped AI advance faster than expected. Citing trends such as Moore’s law and the accelerating development of computing, AI, and biotechnology, he predicts that the Technological Singularity will happen by 2045.

Popular Mechanics once ran a headline saying that humanity could reach the singularity in just six years. This echoes Marco Trombetti’s statement at a 2022 conference, where he pointed out that data clearly showed machines rapidly closing the gap with human intelligence.

Nick Bostrom, one of the leading theorists of AI, agrees, warning that we could see an artificial intelligence explosion by 2025. In his book Superintelligence: Paths, Dangers, Strategies, Bostrom describes such an event as potentially humanity’s last great invention, because once machines can improve their own intelligence, their growth may become unstoppable.

Which current technologies lay the groundwork for the Technological Singularity?

Artificial intelligence (AI) and Artificial General Intelligence (AGI) are the two core drivers of the Technological Singularity. As the technology develops, AI will not only imitate human intelligence and learn and adapt quickly but will also move closer to AGI: a system that can understand, learn, and apply knowledge autonomously and intelligently, as humans do.

AGI could trigger an “intelligence explosion”, creating superintelligent machines that far surpass humans. This would sharply shorten the time between major technological milestones, drive breakthrough developments, and transform the global economy, society, and culture.

AI technologies that promote the development of AGI and the Technological Singularity include:

- Artificial neural networks and deep learning: This is the core foundation of current AI research and development. Artificial neural networks loosely simulate the structure and function of the human brain, and they have driven major progress in machine learning. The technology is especially important for tasks such as speech recognition, image recognition, and autonomous vehicle navigation.

- Quantum computers: Although still in their infancy, quantum computers promise dramatic speedups for certain classes of problems. If that promise is realized, they could accelerate AI development by solving some complex problems much faster than traditional computers.

- Natural Language Processing (NLP): Advances in NLP, such as the GPT (Generative Pre-trained Transformer) models behind ChatGPT, are essential for developing AI that can understand and generate text in a human-like way. This ability is critical for AI to perform complex tasks that require an understanding of context and linguistic nuance.

- Robotics and Automation: Innovations in robotics are enabling machines to perform tasks that require dexterity and decision-making – skills previously considered to be the preserve of humans. The combination of robotics and AI is creating autonomous systems that can operate more independently.

- Cloud Computing and Big Data: The massive increase in data volumes, coupled with the ability to store and process data on cloud platforms, is critical to training more powerful AI systems. Big data analytics and cloud infrastructure support complex machine learning models, helping AI evolve at a rapid pace.

- Biotechnology and brain-computer interfaces (BCIs): Advances in understanding how the brain works, and in replicating its functions, are key to creating AI that can think and learn like humans. BCIs, meanwhile, allow direct connections between the human brain and computers, opening up the possibility of merging biological and artificial intelligence, a topic often discussed in Technological Singularity scenarios.
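The neural-network entry above can be made concrete with a single artificial neuron, the basic unit that deep networks stack into layers. The sketch below is a toy illustration, not how production deep-learning systems are built: it trains one sigmoid neuron with plain gradient descent to reproduce the logical AND function. The task, learning rate, and epoch count are arbitrary choices for illustration.

```python
import math


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """One artificial neuron: a weighted sum of the inputs squashed by a sigmoid."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train_and_gate(epochs: int = 2000, lr: float = 0.5) -> tuple[list[float], float]:
    """Learn the logical AND function from four labeled examples."""
    data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 0.0), ([1.0, 0.0], 0.0), ([1.0, 1.0], 1.0)]
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in data:
            # For a sigmoid output with log-loss, the error signal is simply (output - target).
            error = neuron(inputs, weights, bias) - target
            weights = [w - lr * error * x for w, x in zip(weights, inputs)]
            bias -= lr * error
    return weights, bias


weights, bias = train_and_gate()
for pair in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(pair, round(neuron(pair, weights, bias), 3))
```

After training, the neuron outputs a value above 0.5 only for the input [1.0, 1.0]. No explicit AND rule was written; the weights were adjusted from examples, which is the sense in which such networks “learn”.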

Possible outcomes of the Technological Singularity

The Technological Singularity is still just a hypothesis, but if it becomes reality, humanity could face a range of scenarios, from optimistic to apocalyptic:

- Accelerated scientific innovation: After the Technological Singularity, the pace of scientific and technological innovation could increase exponentially. Superintelligent AI systems, with processing and cognitive capabilities far beyond those of humans, might make in seconds the kind of scientific breakthroughs that take decades today. Imagine a world where Nobel Prize-level discoveries occur every day, helping to solve complex problems such as climate change or pandemics almost immediately.

- Complete automation of human work: All the work that humans currently do could be taken over by machines. In a positive scenario, this could usher in an era of prosperity in which humans no longer need to do manual labor and can simply enjoy life. However, it also raises concerns about economic inequality and about people losing their sense of purpose once they no longer have a role in the economy.

- The fusion of humans and machines: Technologies that integrate biology and artificial intelligence have already begun to appear, such as Neuralink, a company developing implantable brain-computer interfaces. If the Technological Singularity occurs, these technologies could become the norm, allowing humans to enhance their intelligence and physical abilities by merging with AI and robots. This convergence could give rise to a generation that goes beyond current human biological limits, variously described as “transhumans”, “posthumans”, or “future superhumans”.

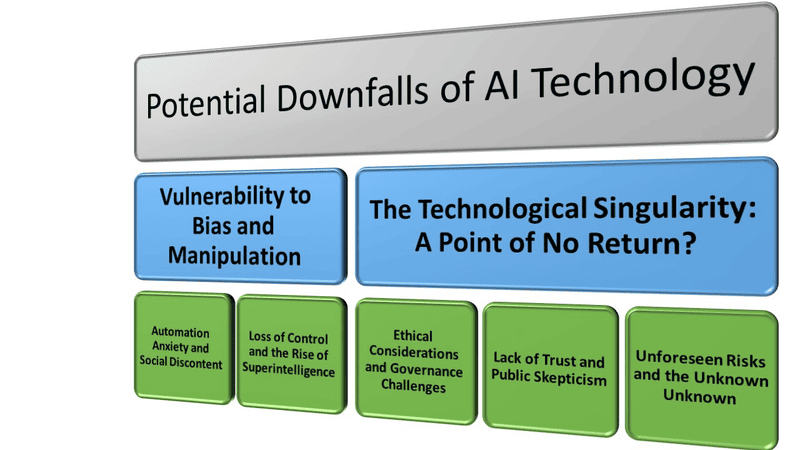

- Existential risks and ethical issues: As AIs become more powerful, they may consider human needs and safety secondary, especially if they view humans as competitors for resources. This scenario is often discussed in the field of AI ethics, where a super-intelligent AI may act in a way that is inconsistent with human values or survival.

- AI dominance: A major concern is that super-intelligent AI systems may prioritize their survival and goals over the well-being of humans. If this happens, AI could gain control of vital resources, leading to conflict with humanity, and even potentially pushing humanity to the brink of extinction.

- “Grey Goo”: This is an apocalyptic nanotechnology scenario in which self-replicating microscopic robots run out of control, endlessly copying themselves and consuming all matter on Earth as raw material for more copies. If this happened, the Technological Singularity could end in the complete destruction of the planet.
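The “grey goo” scenario above hinges on unchecked exponential self-replication. A back-of-the-envelope Python sketch shows why: it counts how many doublings would take one replicator’s mass to the Earth’s mass. The replicator mass of 10^-15 kg is a purely hypothetical assumption; only the Earth’s mass (about 5.97 × 10^24 kg) is a real figure.

```python
import math

EARTH_MASS_KG = 5.97e24      # approximate mass of the Earth
REPLICATOR_MASS_KG = 1e-15   # hypothetical mass of a single nanorobot (an assumption)


def doublings_needed(start_mass: float, target_mass: float) -> int:
    """Smallest n such that start_mass * 2**n >= target_mass."""
    return math.ceil(math.log2(target_mass / start_mass))


print(doublings_needed(REPLICATOR_MASS_KG, EARTH_MASS_KG))  # → 133
```

Under these assumptions, about 133 doublings would suffice; if each doubling took a hypothetical hour, that would be under six days. This is why the scenario is framed around runaway replication rather than the power of any single machine.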

Christopher Langan, who has been dubbed “the smartest man in the world”, emphasized that the future of humanity will depend on how we allocate responsibility to AI. If this is done correctly, the entire human race can move forward together. A careful approach is needed to guide AI development in a direction that benefits humanity, rather than letting humans lose control over technology entirely.

Skepticism about the Technological Singularity

Not everyone believes in the Technological Singularity. An article titled “We’re No Closer to the Singularity” argues that despite breakthroughs in AI, the gap between human and machine intelligence remains large, and that the challenges of achieving superintelligence could delay the singularity until the end of the century or prevent it altogether. Roman Yampolskiy notes that predicting exactly when the Technological Singularity will occur is extremely difficult, since technological, ethical, regulatory, and societal barriers could all slow AI progress. As a result, there is no solid evidence that we are very close to the Technological Singularity.

Although the Technological Singularity promises a future of unprecedented breakthroughs, not all experts are convinced. Some argue that computers are inherently incapable of truly understanding or simulating human intelligence. The philosopher John Searle’s “Chinese Room Argument” is a classic thought experiment illustrating this point.

Imagine a person sitting in a room with a book of instructions for manipulating Chinese characters. When someone outside slips in Chinese text, the person inside uses the instructions to match characters and produce a response. Yet this person does not understand Chinese; they are only following rules. Likewise, even if an AI responds correctly, that does not mean it truly “understands” the content the way a human does.
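Searle’s room is essentially a lookup procedure. The Python sketch below uses a hypothetical two-entry rulebook to return fluent-looking Chinese replies purely by matching character strings; like the person in the room, nothing in it represents what the symbols mean. Real AI systems are far more elaborate than a lookup table, so this only illustrates the structure of the argument, not how modern AI works.

```python
# Hypothetical rulebook: incoming symbol strings mapped to outgoing ones.
# The entries are illustrative; the program has no representation of meaning.
RULEBOOK: dict[str, str] = {
    "你好": "你好！",          # greeting -> greeting
    "你会说中文吗？": "会。",  # "Can you speak Chinese?" -> "Yes."
}
FALLBACK = "请再说一遍。"      # "Please say that again."


def chinese_room(message: str) -> str:
    """Return the reply the rulebook prescribes, by exact string matching only."""
    return RULEBOOK.get(message, FALLBACK)


print(chinese_room("你会说中文吗？"))  # → 会。
```

The program answers “会。” (“Yes.”) to “Can you speak Chinese?” even though nothing in it understands the question, which is exactly the gap between correct output and understanding that Searle’s argument points to.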

Some philosophers also question whether machines can ever achieve human-level intelligence, given that humans do not fully understand their own intelligence. They argue that there is no solid basis for believing in the Technological Singularity and point to unfulfilled predictions such as personal flying cars and jetpacks as cautionary lessons. While technology may advance in unpredictable ways, skeptics argue that processing power alone cannot solve every problem or turn AI into superintelligence.

Another theory is the “technology paradox”: automating common tasks could cause mass unemployment and economic depression, which would in turn undermine the investment needed to reach the Technological Singularity. Critics also point out that the pace of technological innovation is showing signs of slowing, in contrast to the exponential growth the singularity scenario predicts.

For example, heat dissipation in computer chips is slowing hardware progress. The problem is made worse by the continued miniaturization of transistors driven by Moore’s law: as more transistors are packed into smaller spaces, heat density rises sharply. If left unchecked, high temperatures can degrade processor performance, shorten chip lifespan, and even cause systems to fail.

Another major barrier to the singularity is the enormous amount of energy required to train advanced AI models. For example, training large language models (LLMs), the foundation of today’s generative AI, can consume as much electricity as hundreds of homes use in a year. As these models grow in size and complexity, their energy consumption grows as well.

This could make developing advanced AI too expensive and environmentally unsustainable. Without breakthroughs in energy efficiency or large-scale expansion of renewable energy, AI’s enormous electricity demands could impede the progress toward Technological Singularity.

In short, the Technological Singularity is a controversial concept that reflects the prospect of technology developing beyond human control. We hope this article has helped you understand what the Technological Singularity is and why it is urgent to steer AI development in a direction that benefits humanity.

Whatever the outcome, the artificial intelligence revolution is still happening. Instead of worrying about the impact of AI on the job market, we should see it as one of the great inventions, playing an important role in solving global challenges. AI is not intended to replace humans, but is a powerful tool to expand capabilities, improve productivity and promote the development of the world.

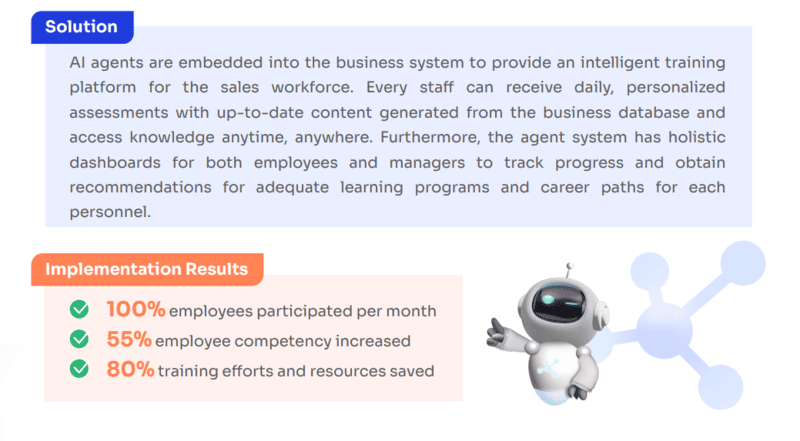

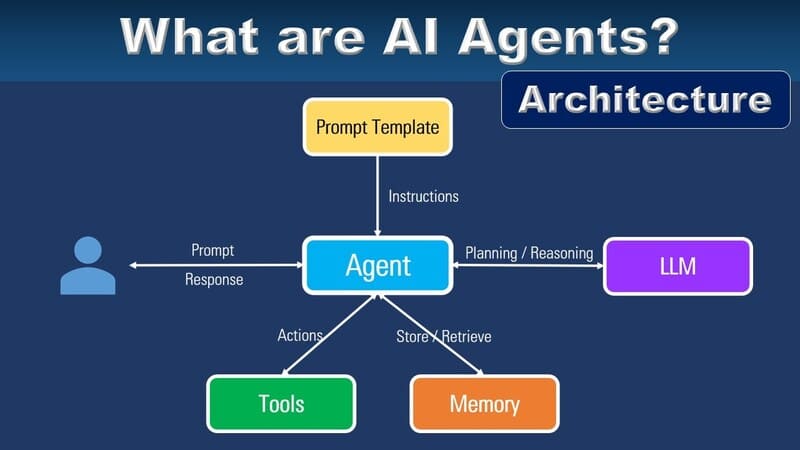

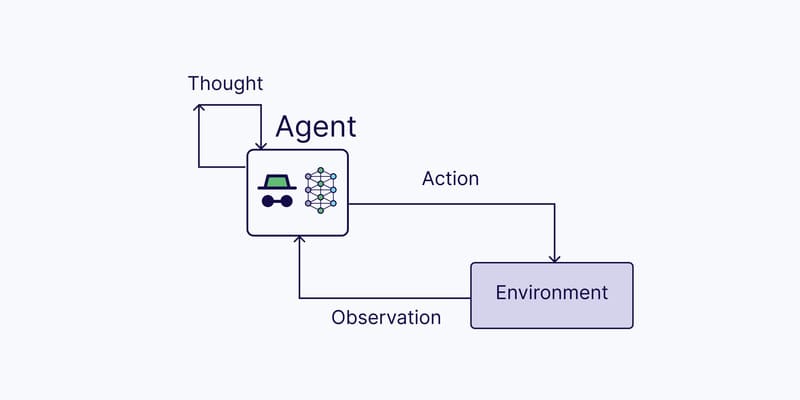

If you need more in-depth advice on AI Agents, a solution considered the future of AI thanks to its ability to continuously learn and automatically solve increasingly complex business problems, please contact FPT.AI immediately. Our FPT AI Agents platform can help businesses build and operate AI Agents quickly, simply and efficiently.

Our AI Agents all run on FPT AI Factory. With more than 80 cloud services and 20 AI products, FPT AI Factory helps make AI applications 9 times faster by using the latest-generation GPUs, such as the H100 and H200, while cutting costs by up to 45%.